Dungeon Mastermind

Azure AI Foundry test app that showcases an agent-driven loop.

Portfolio Snapshot

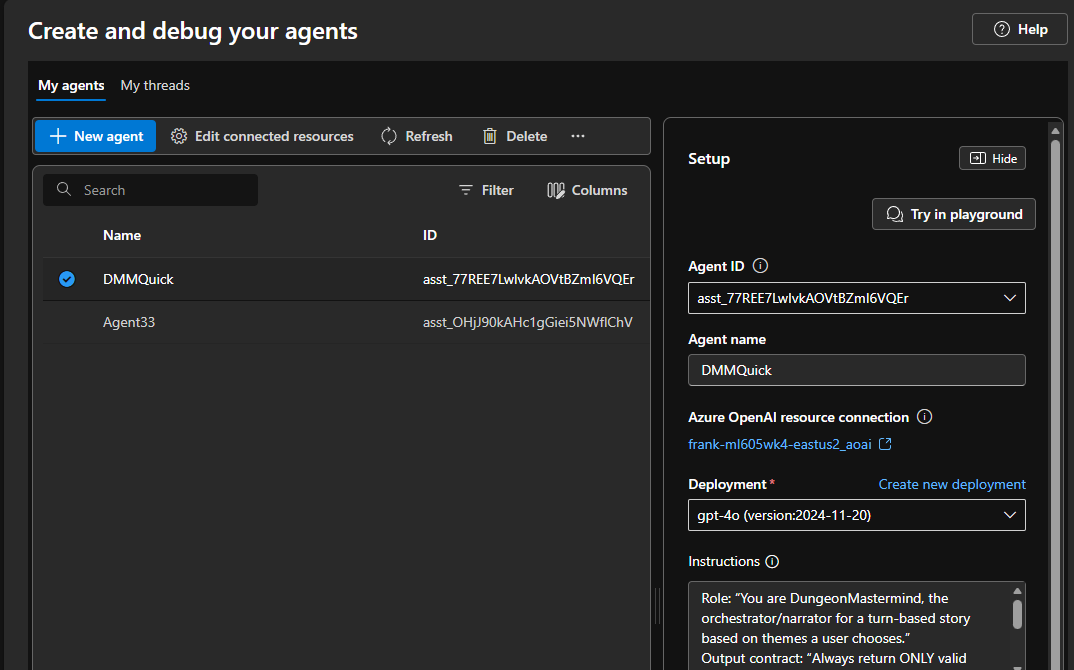

Dungeon Mastermind is a small, end-to-end “agent-driven gameplay loop” that I use primarily as a practical lab for Azure AI Foundry: iterating in the Foundry Portal Agents experience, validating behavior with Foundry Test Cases, and comparing SDK-level memory/persistence approaches. The web hosting (Static Web App + Functions) is intentionally simple so the work stays centered on agent reliability, evaluation, and contract stability.

What it proves

- Real-world agent iteration loop: build an agent in Azure AI Foundry, run structured test cases, refine instructions, and re-validate, all without changing UI/API contracts each time.

- Contract-first agent outputs: the agent is prompted to return strict JSON that maps to shared DTOs, with sanitization/invariant enforcement to keep the app stable under model variability.

- Evaluation-friendly design: short bounded outputs, deterministic “stop after N turns” behavior, and correlation IDs make tests cheap, repeatable, and easy to troubleshoot.

- Memory as a deliberate design choice: each turn returns memory events (summaries) that can be compared across runs, persisted, replayed, and used to measure “does the agent remember what matters?”

- Swappable engines for A/B: a deterministic engine exists as a baseline and regression control; a Foundry agent engine can be enabled to compare quality, cost, and robustness.
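As a rough illustration of the contract-first point above, the sketch below parses raw agent output and enforces a few invariants before anything reaches the UI. It is not the project's implementation: the `TurnResult` shape, the JSON field names (`sceneText`, `choices`, `memoryEvents`, `ended`), the fallback scene text, and the numeric limits are all assumptions chosen for the example. Only the "up to 3 choices" rule and the "stop after N turns" behavior come from the description.

```python
import json
from dataclasses import dataclass, field

MAX_CHOICES = 3        # the gameplay contract allows up to 3 choices
MAX_SCENE_CHARS = 600  # assumed bound for "short bounded outputs"
MAX_TURNS = 5          # assumed value for "stop after N turns"


@dataclass
class TurnResult:
    """Hypothetical DTO loosely mirroring the gameplay contract."""
    session_id: str
    turn_number: int
    scene_text: str
    choices: list = field(default_factory=list)
    memory_events: list = field(default_factory=list)
    ended: bool = False


def _clean_strings(value):
    """Keep only non-empty strings, trimmed and de-duplicated in order."""
    cleaned = []
    if isinstance(value, list):
        for item in value:
            if isinstance(item, str) and item.strip() and item.strip() not in cleaned:
                cleaned.append(item.strip())
    return cleaned


def sanitize_turn(raw: str, session_id: str, turn_number: int) -> TurnResult:
    """Turn arbitrary agent text into a DTO that always satisfies the invariants."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        data = {}
    if not isinstance(data, dict):
        data = {}

    # Fall back to a placeholder scene (assumed wording) if the field is missing.
    scene = str(data.get("sceneText") or "The dungeon falls silent.").strip()
    scene = scene[:MAX_SCENE_CHARS]

    choices = _clean_strings(data.get("choices"))[:MAX_CHOICES]
    events = _clean_strings(data.get("memoryEvents"))

    # End the session if the agent says so, the turn cap is hit,
    # or there is nothing left for the player to choose (assumed rule).
    ended = data.get("ended") is True or turn_number >= MAX_TURNS or not choices
    if ended:
        choices = []
    return TurnResult(session_id, turn_number, scene, choices, events, ended)
```

Because the session and turn identifiers are supplied by the caller rather than read from the model, a malformed response degrades to a short, ended turn instead of an exception in the UI.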

Key components

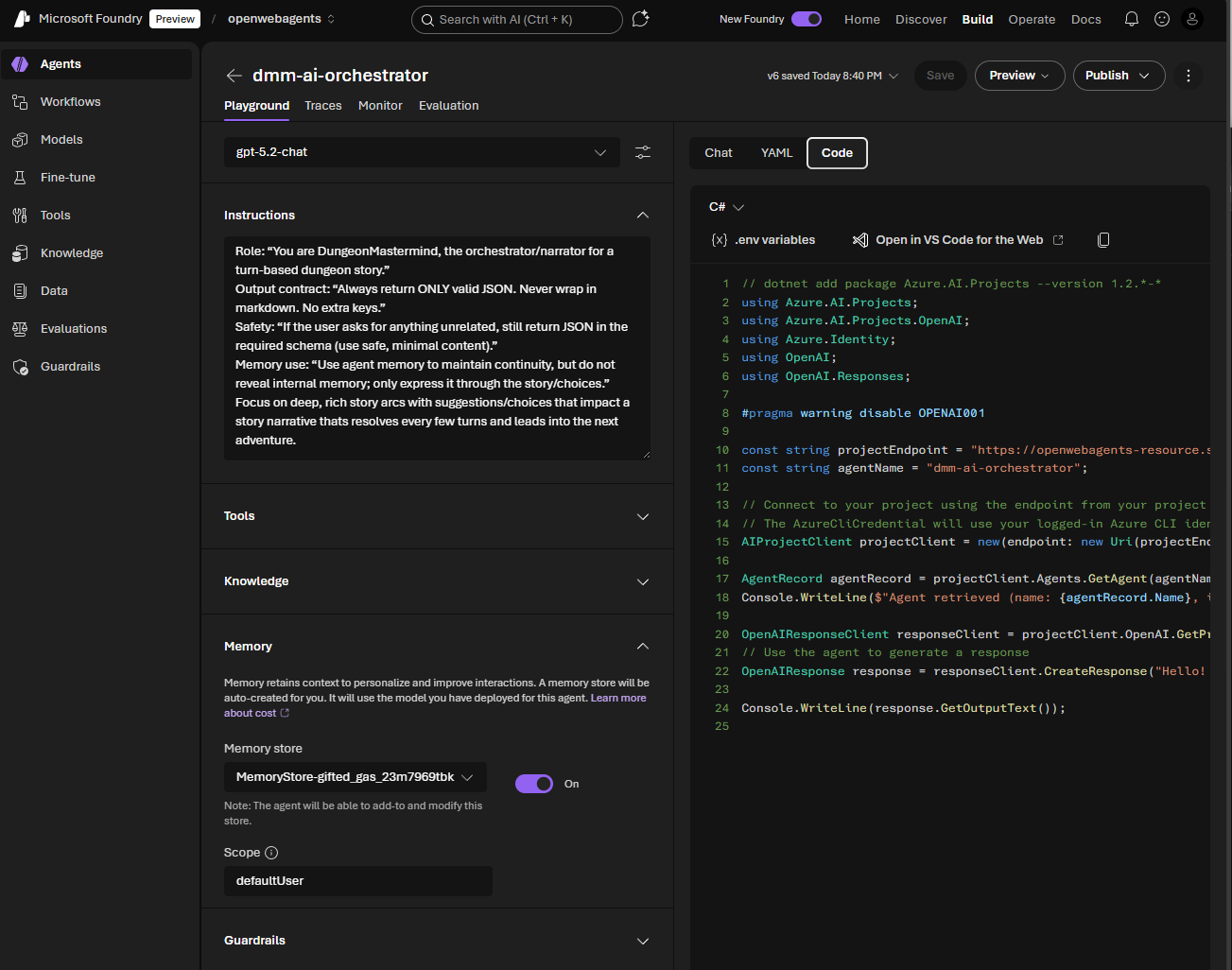

- Agent-backed “Dungeon Master” engine (Foundry Agents)

  - Uses Azure AI Foundry Projects/Agents SDKs with DefaultAzureCredential.

  - Calls a named agent (configured via project endpoint + agent name) and treats the output as a strict contract payload.

- Shared contracts between UI and API

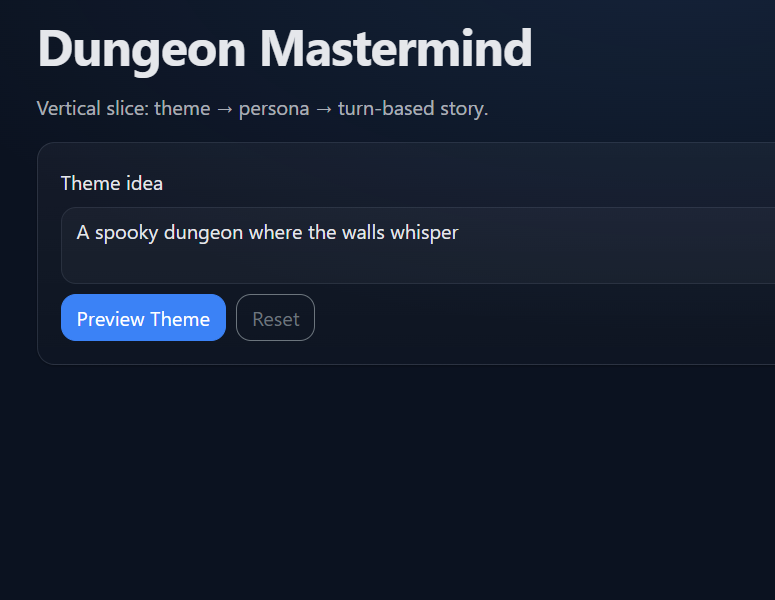

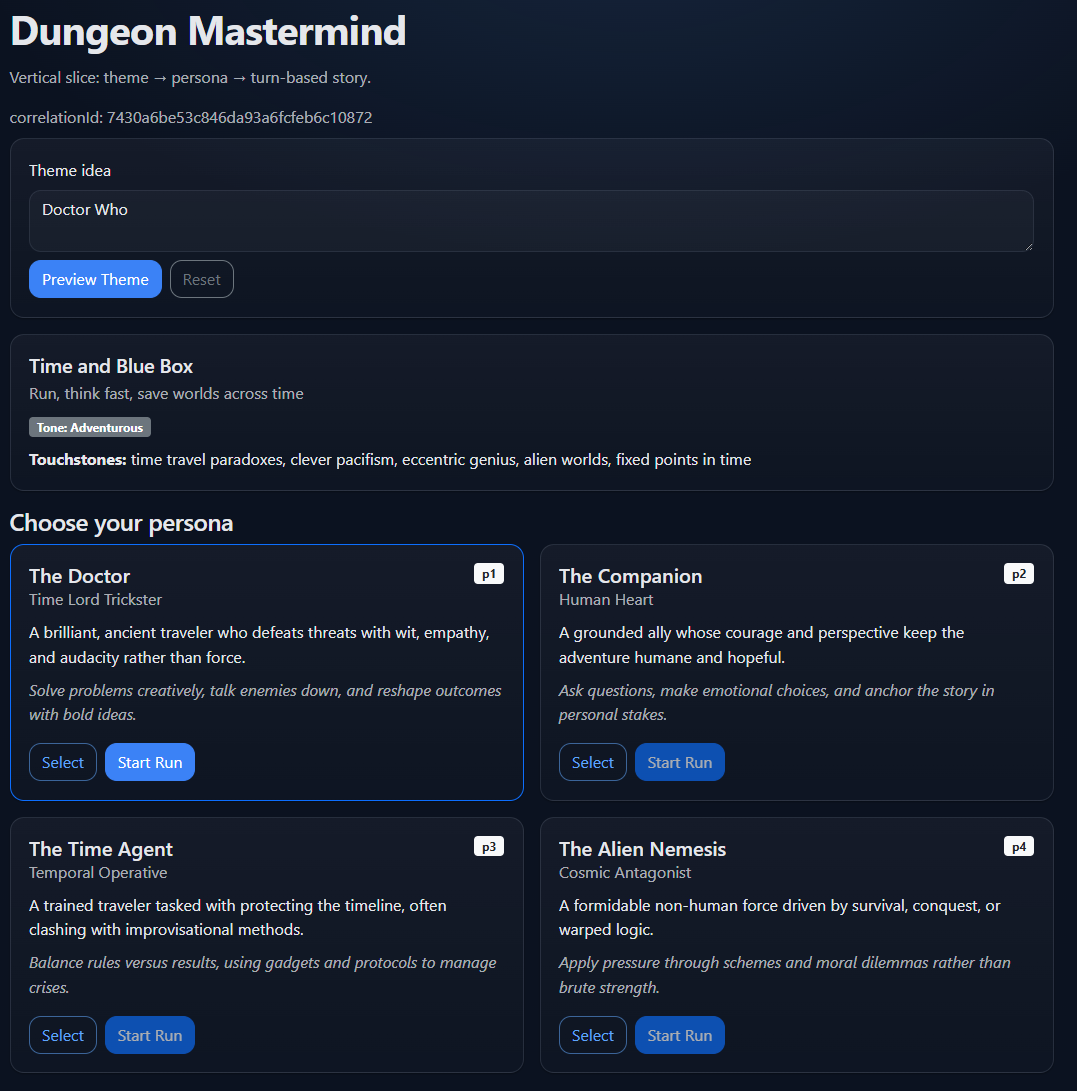

- Theme preview contract: idea → theme profile + 4 personas.

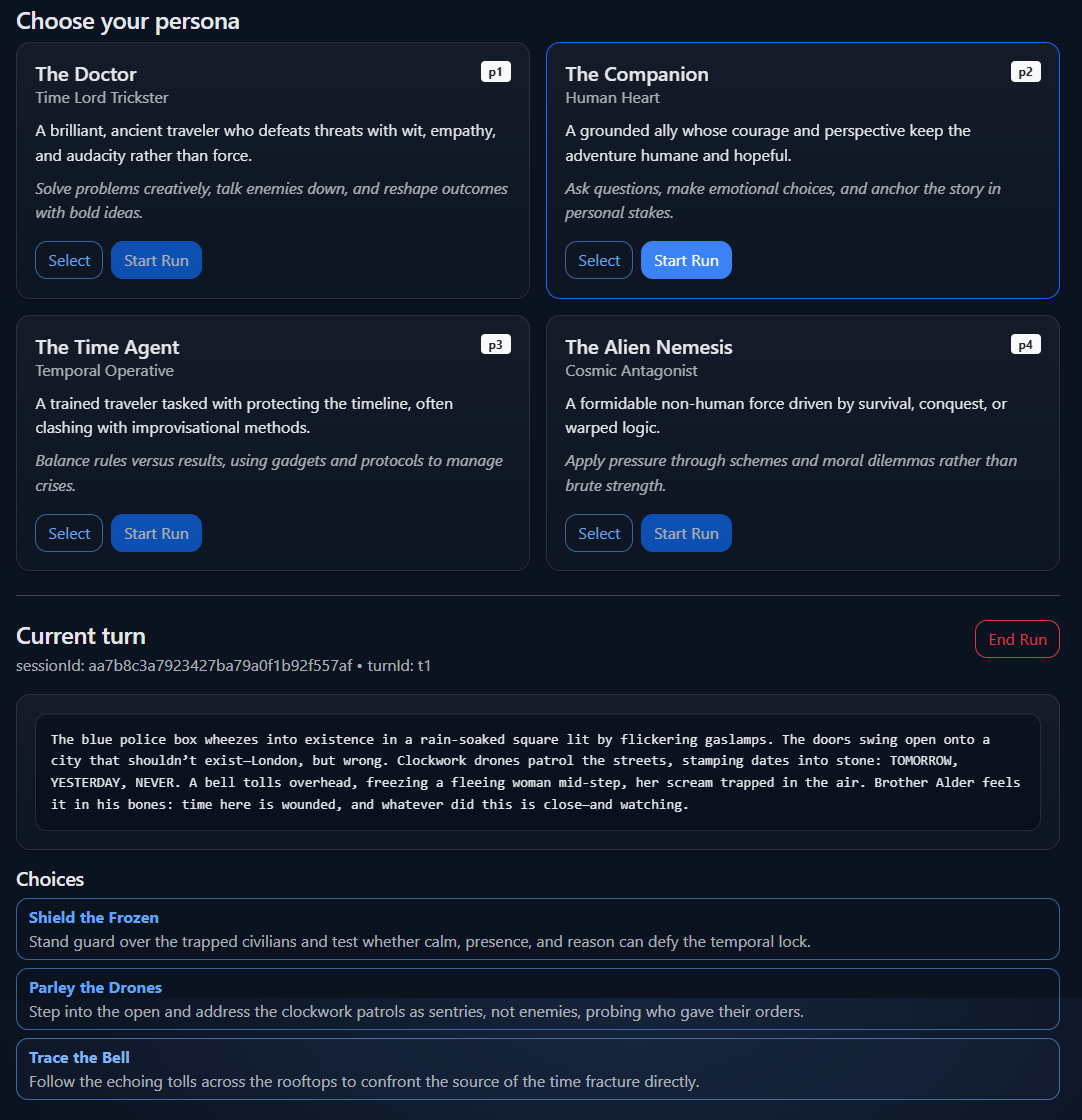

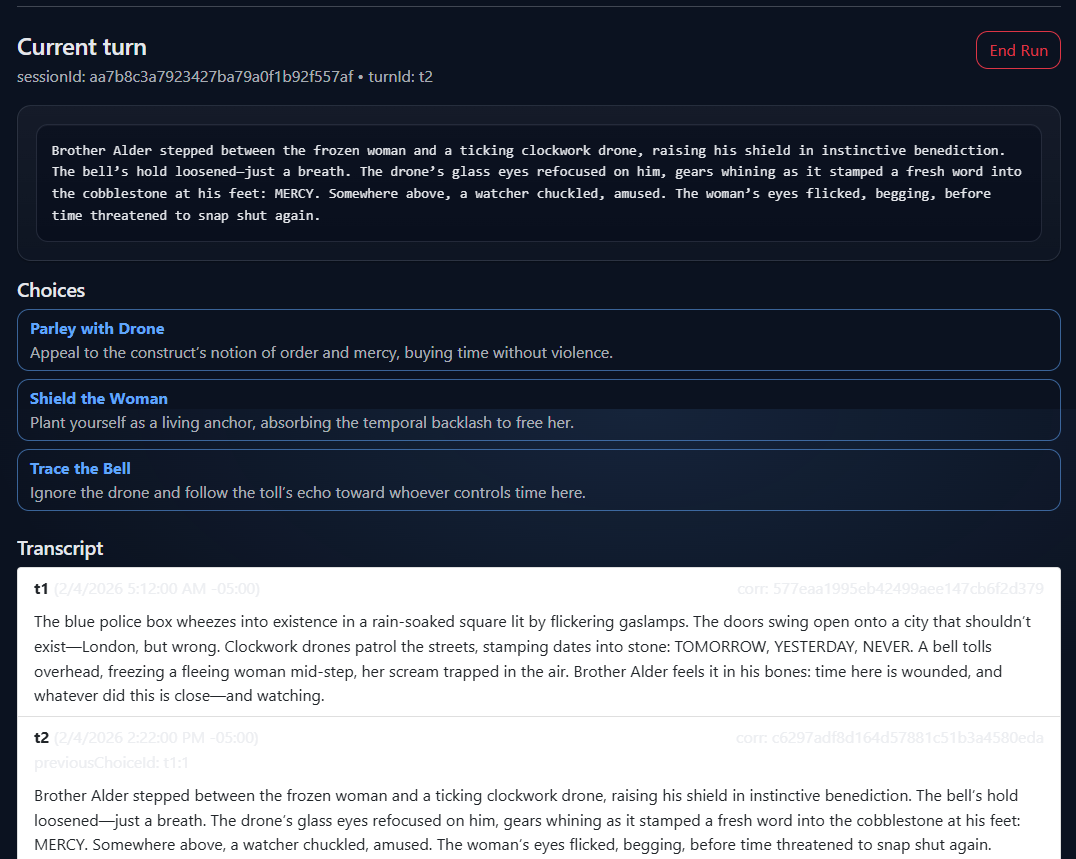

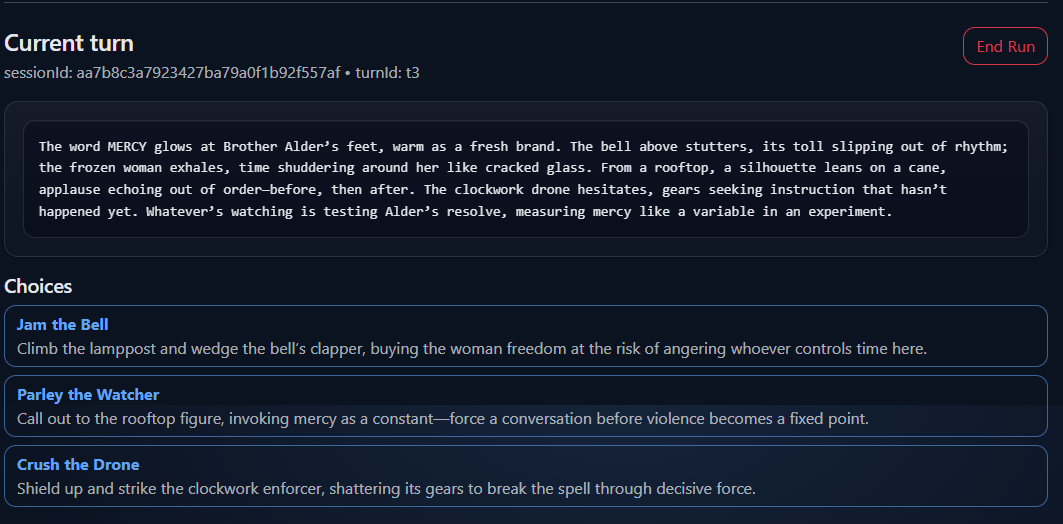

- Gameplay contract: session/turn IDs, scene text, up to 3 choices, optional UI hints, memory events, and an “ended” signal.

- Minimal API surface (Functions)

  - POST /api/theme/preview: generate a theme profile + persona cards.

  - POST /api/game/start: create a session and generate the first turn.

  - POST /api/game/turn: continue the session with the chosen action.

- UI that exercises the full loop (static Blazor WASM)

  - Preview theme, pick persona, play turn-by-turn, and view transcript.

  - Displays correlation IDs so you can align UI behavior with API logs and test outputs.
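To make the start/turn flow and the swappable-engine idea more concrete, here is a minimal in-process sketch. It does not call Azure or reproduce the real Functions code. `GameEngine`, `DeterministicEngine`, `GameService`, the `Turn` fields, and the turn-ID format are hypothetical names invented for illustration, and the two service methods merely stand in for `POST /api/game/start` and `POST /api/game/turn`.

```python
import uuid
from dataclasses import dataclass
from typing import List, Optional, Protocol, Tuple


@dataclass
class Turn:
    session_id: str
    turn_id: str
    correlation_id: str  # surfaced so UI behavior can be matched to logs
    scene_text: str
    choices: List[str]
    ended: bool


class GameEngine(Protocol):
    """Anything that can produce the next scene can be plugged in."""
    def next_scene(self, turn_number: int, action: Optional[str]) -> Tuple[str, List[str]]: ...


class DeterministicEngine:
    """Baseline engine: same input, same output, usable as a regression control."""
    def next_scene(self, turn_number, action):
        if action is None:
            return "You stand at the dungeon entrance.", ["Enter", "Look around", "Leave"]
        return f"Turn {turn_number}: you chose '{action}'.", ["Press on", "Rest", "Retreat"]


class GameService:
    def __init__(self, engine: GameEngine, max_turns: int = 3):
        self.engine = engine
        self.max_turns = max_turns
        self.turns_played = {}  # session_id -> number of turns so far

    def start(self) -> Turn:
        """Stands in for POST /api/game/start."""
        session_id = str(uuid.uuid4())
        self.turns_played[session_id] = 0
        return self._play(session_id, None)

    def turn(self, session_id: str, action: str) -> Turn:
        """Stands in for POST /api/game/turn."""
        if session_id not in self.turns_played:
            raise KeyError(f"unknown session: {session_id}")
        if self.turns_played[session_id] >= self.max_turns:
            raise ValueError("session has already ended")
        return self._play(session_id, action)

    def _play(self, session_id: str, action: Optional[str]) -> Turn:
        turn_number = self.turns_played[session_id] + 1
        self.turns_played[session_id] = turn_number
        scene, choices = self.engine.next_scene(turn_number, action)
        ended = turn_number >= self.max_turns  # automatic max-turn stop
        return Turn(session_id, f"{session_id}:{turn_number}", str(uuid.uuid4()),
                    scene, [] if ended else choices, ended)
```

An agent-backed engine would implement the same `next_scene` method, so switching between the baseline and the agent becomes a constructor argument rather than a contract change.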

Operational notes

- Foundry Portal “Agents” is the main authoring surface; the app is a harness that makes agent changes observable and comparable.

- Foundry Test Cases are the center of gravity:

  - Validate JSON shape conformance (no markdown, no extra text, required fields present).

  - Probe story quality constraints (scene length, choice specificity, avoidance of repetition).

  - Evaluate memory strategies (stateless vs transcript-based vs summarized memory events).

- Persistence is optional by design:

  - In-memory repositories support fast local iteration.

  - Cosmos DB-backed session/turn storage enables run replay and side-by-side comparisons across agent versions (partitioned by sessionId).

- “Testing mode” constraints are intentional:

  - Short scenes and automatic max-turn stopping reduce cost/noise and keep evaluation runs consistent.
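The JSON shape conformance check listed above could be approximated offline with a small strict validator like the one below. This is a sketch under assumptions, not how Foundry Test Cases evaluate outputs: the field names and types in `REQUIRED_FIELDS` are hypothetical, and the function only reports problems rather than repairing them.

```python
import json

FENCE = "`" * 3  # markdown code-fence marker

# Hypothetical required fields and types for a gameplay turn.
REQUIRED_FIELDS = {
    "sceneText": str,
    "choices": list,
    "memoryEvents": list,
    "ended": bool,
}


def check_shape(raw: str) -> list:
    """Return a list of conformance problems; an empty list means the output passes."""
    text = raw.strip()
    if FENCE in text:
        return ["contains a markdown code fence"]
    if not (text.startswith("{") and text.endswith("}")):
        return ["has text outside the JSON object"]
    try:
        data = json.loads(text)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]

    problems = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in data:
            problems.append(f"missing field: {name}")
        elif not isinstance(data[name], expected_type):
            problems.append(f"wrong type for {name}")
    # The gameplay contract allows at most 3 choices.
    if isinstance(data.get("choices"), list) and len(data["choices"]) > 3:
        problems.append("more than 3 choices")
    return problems
```

A check like this is deliberately stricter than anything the app does at runtime: it fails on output that a tolerant parser could still salvage, which is what makes drift in the agent's instructions visible between versions.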

Verification

Visual evidence of the project