AAII — Phase 2 - MCP Server, Jupyter Notebooks

Public-safe, end-to-end path from Terraform IaC → GitHub OIDC → containerized API deployment, with a local Blazor UI to exercise the API.

Portfolio Snapshot

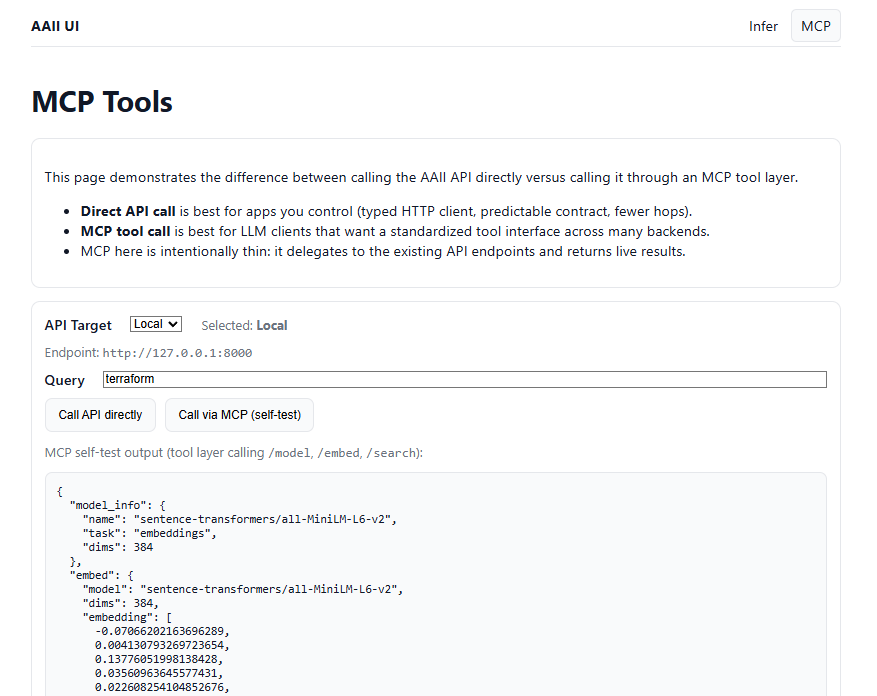

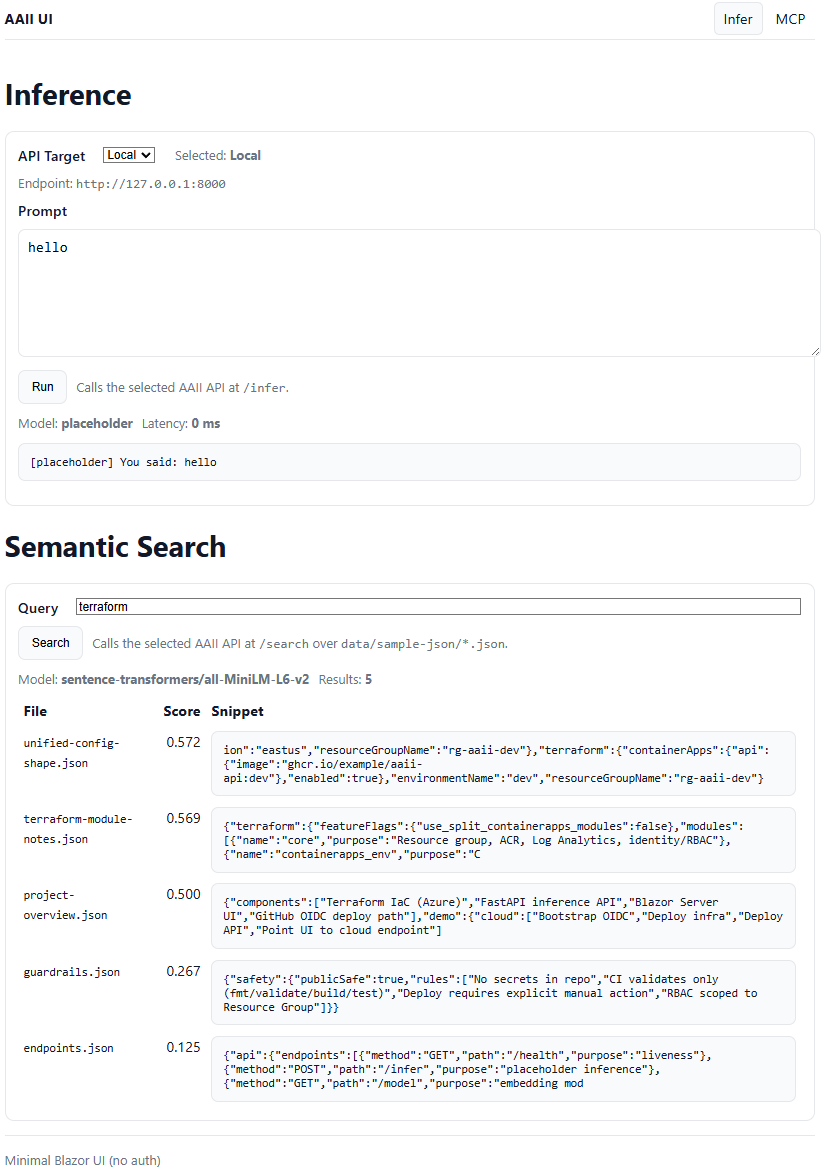

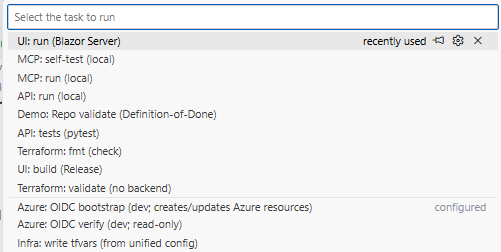

Phase 2 extends the AAII platform with an AI tooling layer, exposing the deployed inference and semantic search capabilities through MCP (Model Context Protocol), notebooks, and a comparative UI. This phase focuses on LLM-facing interfaces, evaluation workflows, and AI system ergonomics, rather than infrastructure.

What it proves

- Ability to expose AI capabilities as standardized tools for LLM clients (MCP), not just HTTP APIs.

- Practical understanding of when to use direct APIs vs tool-mediated calls.

- Experience designing AI systems that are inspectable, testable, and evaluation-friendly.

- Comfort working across API, tool protocol, UI, and notebook-based analysis layers.

Key components

- Minimal MCP server delegating to live AAII API endpoints (/model, /embed, /search).

- MCP tool surface for semantic search and embeddings over a real JSON corpus.

- Blazor UI demonstrating side-by-side:

  - direct API calls

  - MCP tool-layer calls

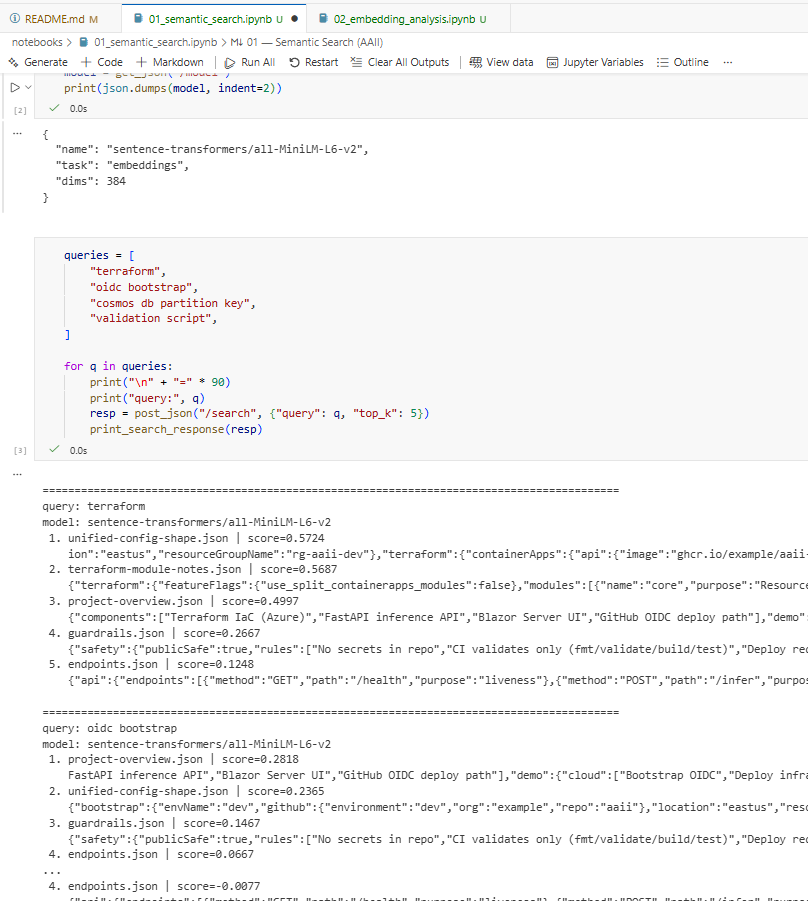

- Jupyter notebooks for:

  - embedding inspection and similarity analysis

  - semantic search validation and ranking behavior

- CPU-friendly transformer embeddings with deterministic outputs and cached document vectors.
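
The similarity analysis and ranking checks listed above can be sketched roughly as follows. This is a minimal, dependency-free illustration rather than the project's notebook code: `toy_embed` is a hypothetical stand-in for the real transformer embedding (a deterministic letter-count vector), and `cached_embed`, `cosine`, and `rank` are names invented for this example. It only shows the shape of the workflow: embed once, cache the vector, and rank documents by cosine similarity.

```python
import math
from functools import lru_cache


def toy_embed(text: str) -> tuple[float, ...]:
    """Hypothetical stand-in for the real model: a deterministic
    26-dimensional letter-count vector (NOT a transformer embedding)."""
    counts = [0.0] * 26
    for ch in text.lower():
        if "a" <= ch <= "z":
            counts[ord(ch) - ord("a")] += 1.0
    return tuple(counts)


@lru_cache(maxsize=None)
def cached_embed(text: str) -> tuple[float, ...]:
    """Cache vectors so each document is embedded only once."""
    return toy_embed(text)


def cosine(a: tuple[float, ...], b: tuple[float, ...]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank(query: str, docs: list[str]) -> list[tuple[float, str]]:
    """Return (score, document) pairs, highest similarity first."""
    q = cached_embed(query)
    scored = [(cosine(q, cached_embed(d)), d) for d in docs]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


if __name__ == "__main__":
    for score, doc in rank("terraform", ["jupyter notebook", "terraform modules"]):
        print(f"{score:.3f}  {doc}")
```

Because the embedding is deterministic and cached, repeated runs give identical rankings, which is the property that makes ranking behavior easy to validate in a notebook.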

Operational notes

- MCP layer is intentionally thin (no duplicated business logic).

- All tooling runs against the same deployed API used in Phase 1.

- Notebooks and MCP tools are designed for learning, debugging, and evaluation—not production automation.
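
As an illustration of what "intentionally thin" can look like, the sketch below forwards each tool call to an HTTP endpoint and adds no logic of its own. It is a hedged, standard-library-only example and does not use a real MCP SDK: the base URL, the tool names, the HTTP methods, and the payload shapes are all assumptions made for this example. Only the endpoint paths (/model, /embed, /search) come from this page.

```python
import json
from typing import Any, Callable, Optional
from urllib import request

# Hypothetical base URL; the real deployment address is not given here.
BASE_URL = "https://api.example.invalid"

# Tool name -> (HTTP method, endpoint path).
# Tool names and methods are assumptions; the paths are from the README.
TOOL_ROUTES: dict[str, tuple[str, str]] = {
    "model_info": ("GET", "/model"),
    "embed_text": ("POST", "/embed"),
    "semantic_search": ("POST", "/search"),
}

Sender = Callable[[str, str, Optional[dict]], Any]


def http_send_json(method: str, url: str, payload: Optional[dict]) -> Any:
    """Send a JSON request with urllib and decode the JSON response."""
    data = None
    headers = {"Accept": "application/json"}
    if method != "GET" and payload is not None:
        data = json.dumps(payload).encode("utf-8")
        headers["Content-Type"] = "application/json"
    req = request.Request(url, data=data, headers=headers, method=method)
    with request.urlopen(req, timeout=10) as resp:
        return json.loads(resp.read().decode("utf-8"))


def call_tool(name: str, arguments: Optional[dict],
              send: Sender = http_send_json) -> Any:
    """Delegate a tool call to the API; no business logic lives here."""
    if name not in TOOL_ROUTES:
        raise ValueError(f"unknown tool: {name}")
    method, path = TOOL_ROUTES[name]
    return send(method, BASE_URL + path, arguments)
```

Making the transport (`send`) injectable keeps the delegation layer testable without a network connection: a fake sender can record what would have been called, so the tool path and the direct API path can be compared request for request.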

Verification

Visual evidence of the project